QA Automation • April 18, 2026

The Real Cost of a Bug That Reaches Production in 2026

A production bug costs 10x–100x more to fix than one caught during testing. Here's what it actually costs your team — and how to stop it from happening.

TL;DR

A bug caught early, at the requirements or design stage, costs around $100 to fix. The same bug found in production costs up to $10,000, and that's before you factor in downtime, lost users, and engineering time diverted from building features. Poor software quality costs U.S. companies an estimated $2.41 trillion annually. Most of it is preventable.

The Bug Nobody Talks About

Every software team knows bugs happen. What most teams don't fully reckon with is the difference between a bug caught before release and one that slips through.

The fix itself might take the same two hours either way. But a production bug doesn't just need a fix — it needs emergency triage, customer communication, a hotfix deployment, regression validation, and sometimes a public postmortem. By the time it's over, that two-hour fix has consumed two days of your team's focus.

And that's a small bug.

The Numbers Are Worse Than You Think

According to the Consortium for Information & Software Quality (CISQ), a body co-sponsored by Carnegie Mellon University, the cost of poor software quality in the United States reached an estimated $2.41 trillion in 2022. That number includes failed projects, legacy system problems, cybersecurity failures, and operational glitches, all downstream consequences of defects that weren't caught early enough.

On a per-company level, according to the Quality Transformation Report, poor software quality costs companies over $1 million annually on average. In the U.S., 45% of businesses report losses above $5 million per year.

The cost per minute of downtime is equally brutal. Gartner estimates average downtime costs $9,000 per minute. For large e-commerce sites, these numbers explode — Amazon loses around $200,000 per minute during peak traffic outages, and a 13-minute outage once cost them $2.6 million.

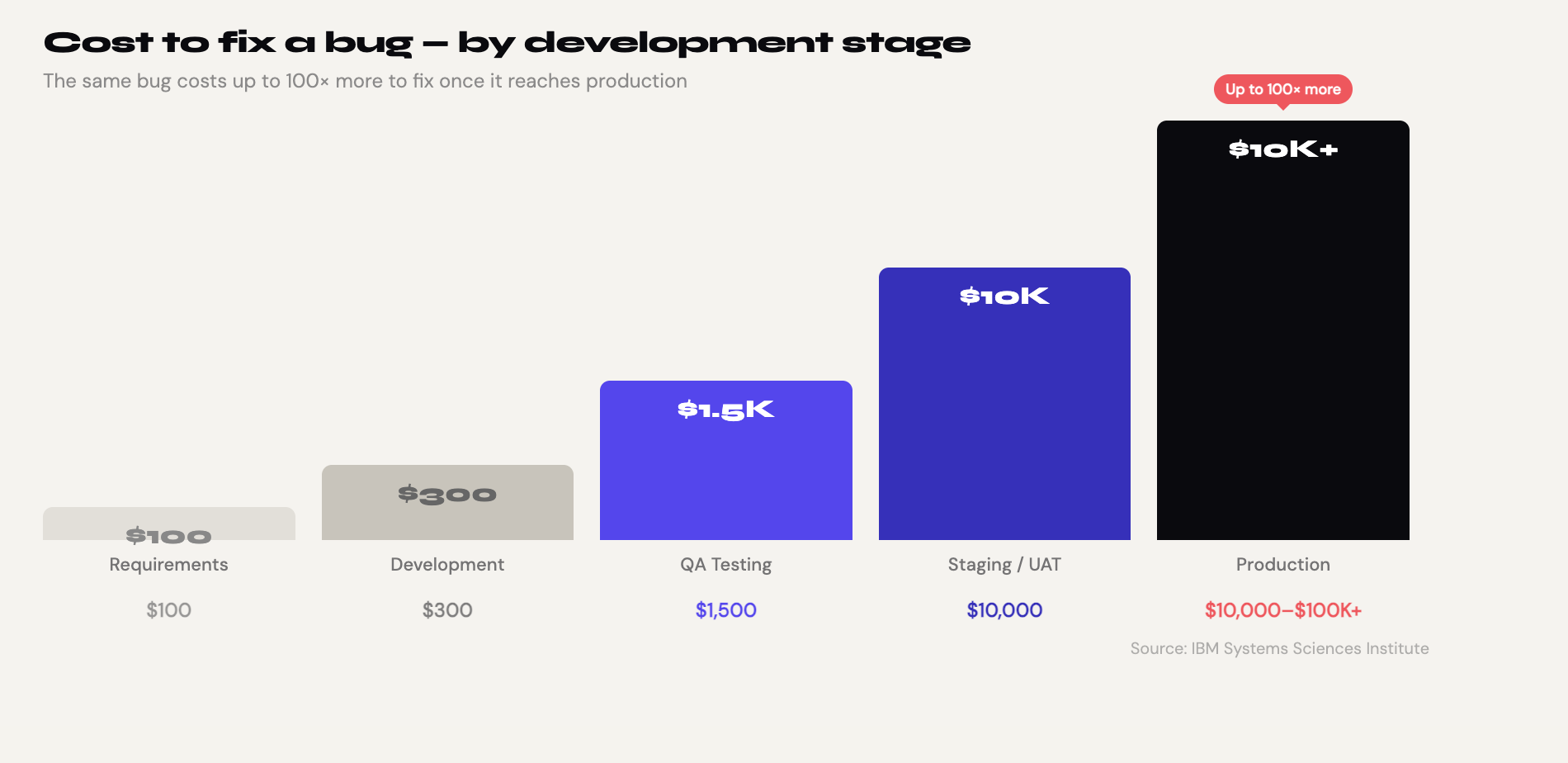

Why the Same Bug Costs 100x More in Production

The core reason is compounding. A bug doesn't exist in isolation — it interacts with users, data, downstream systems, and your team's attention. The further a defect travels through the development lifecycle, the more systems it touches and the more expensive it becomes to unwind.

The Systems Sciences Institute at IBM reported that an error found after product release costs four to five times as much to fix as one uncovered during design, and up to 100 times as much once it is identified in the maintenance phase. A bug found in the requirements-gathering phase might cost $100. The same bug found in QA testing costs $1,500. By production, that figure reaches $10,000.

Here's what that looks like visually:

| Stage | Cost to Fix |
|---|---|
| Requirements / Design | ~$100 |
| Development | ~$300 |
| QA / Testing | ~$1,500 |
| Production | ~$10,000 |
| Post-release (with customer impact) | $10,000–$100,000+ |

Source: IBM Systems Sciences Institute
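To make the escalation concrete, here is a minimal Python sketch that turns the table's illustrative figures into multiples of the earliest stage. The dictionary and function names are hypothetical, and the dollar amounts are the article's rough estimates, not measured data.

```python
# Illustrative per-stage fix costs in USD, copied from the table above.
# Ordered from the earliest stage to the latest.
STAGE_COST_USD = {
    "Requirements / Design": 100,
    "Development": 300,
    "QA / Testing": 1_500,
    "Production": 10_000,
}


def escalation_multipliers(costs: dict[str, int]) -> dict[str, float]:
    """Return each stage's cost as a multiple of the earliest stage."""
    baseline = next(iter(costs.values()))  # first entry is the earliest stage
    return {stage: cost / baseline for stage, cost in costs.items()}


multipliers = escalation_multipliers(STAGE_COST_USD)
for stage, factor in multipliers.items():
    print(f"{stage}: {factor:g}x")
```

With these inputs the production multiple comes out at 100x the requirements-stage cost, which is the upper end of the 10x–100x range quoted in the introduction.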

The reason for this escalation is the domino effect. When a bug is found in production, the code needs to go back to the beginning of the SDLC so the development cycle can restart. The fix itself can bump back other code changes, which adds cost to those changes as well.

The Hidden Costs Nobody Puts in the Bug Report

The $10,000 figure above is just the direct cost. The real damage goes deeper.

Lost users. 68% of users will abandon an application after encountering just two software bugs or glitches. And 88% of users are less likely to use a company's app if they've had a bad experience with it. In a competitive SaaS market, you rarely get a second chance.

Developer productivity. A single interruption, such as an urgent bug report from production, can take a developer up to 23 minutes to recover from and regain full focus, according to research from the University of California, Irvine. Multiply that by five engineers pulled into an emergency call, and you've lost the better part of a morning.

Opportunity cost. Teams spend 30–50% of sprint cycles firefighting defects instead of building new features. Every sprint your engineers spend patching production is a sprint they're not spending on the roadmap that drives growth.

Reputation damage. 81% of consumers lose trust in brands following major software failures. Unlike a bug, reputation damage doesn't come with a patch.

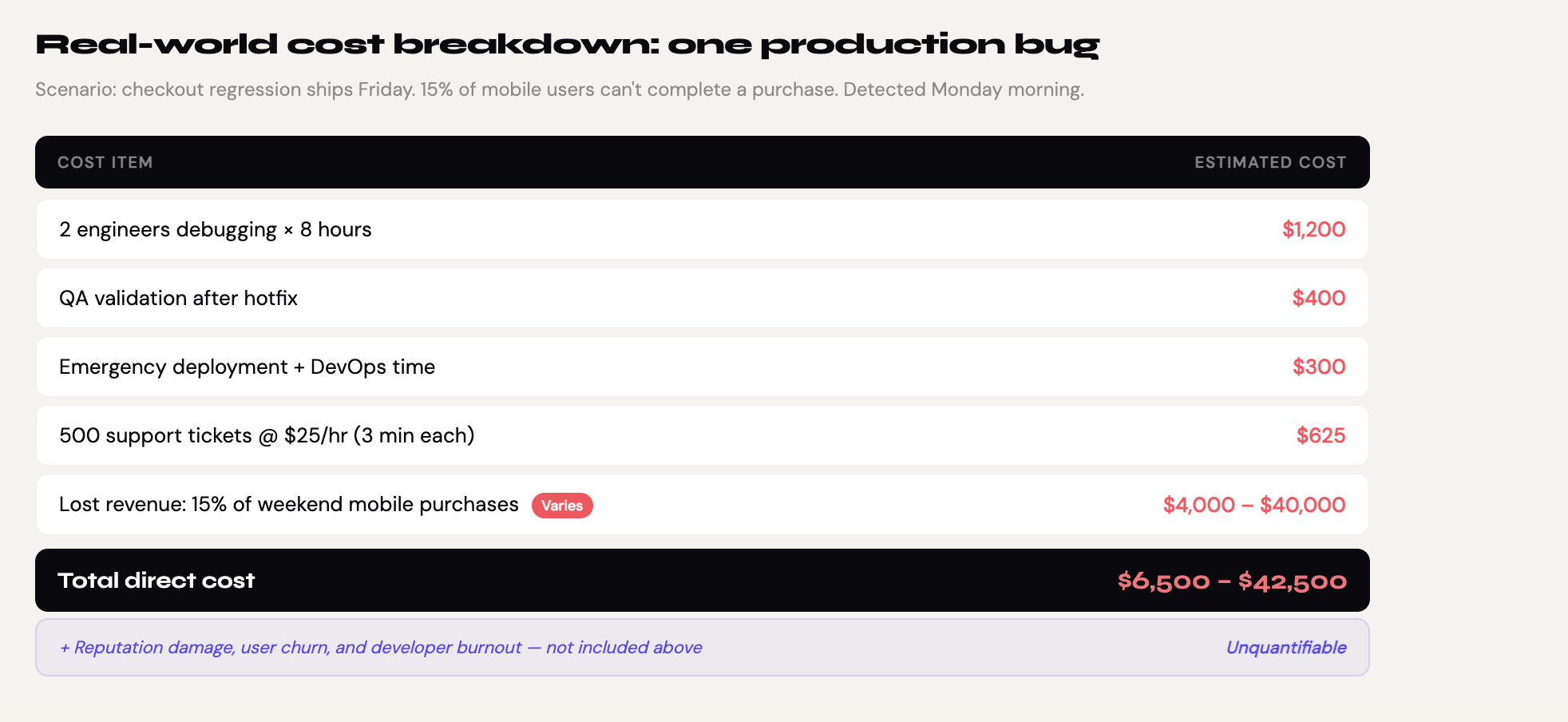

A Real-World Example: What a Critical Bug Actually Costs

Let's put concrete numbers on a scenario that plays out at mid-size SaaS companies every week.

Imagine a checkout regression ships to production on a Friday afternoon. It's a subtle bug — 15% of users can't complete a purchase on mobile. It goes undetected until Monday morning when support tickets spike.

Here's what the actual cost looks like:

| Item | Estimated Cost |
|---|---|
| 2 engineers debugging for 8 hours | $1,200 |
| QA validation after hotfix | $400 |
| Emergency deployment + DevOps time | $300 |
| 500 support tickets (3 min each at $25/hr) | $625 |
| Lost revenue: 15% of weekend mobile purchases | $4,000–$40,000 |
| Reputation damage / churn | Unquantifiable |
| Total direct cost | $6,500–$42,500 |
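The totals in the table can be reproduced with a few lines of Python. This is a sketch under stated assumptions: the $75/hour engineer rate is not given in the article and is only implied by $1,200 for two engineers over eight hours, and all variable names are hypothetical.

```python
# Assumption: $75/hour is implied by $1,200 for 2 engineers x 8 hours.
ENGINEER_RATE_USD = 75
SUPPORT_RATE_USD = 25  # from the table: $25/hr per support agent

debugging = 2 * 8 * ENGINEER_RATE_USD          # 2 engineers, 8 hours each
qa_validation = 400                            # flat figure from the table
deployment = 300                               # flat figure from the table
support_hours = 500 * 3 / 60                   # 500 tickets at 3 minutes each
support = support_hours * SUPPORT_RATE_USD

# Lost revenue is given only as a range in the table.
lost_revenue_low, lost_revenue_high = 4_000, 40_000

fixed_costs = debugging + qa_validation + deployment + support
total_low = fixed_costs + lost_revenue_low
total_high = fixed_costs + lost_revenue_high

print(f"Fixed costs: ${fixed_costs:,.0f}")
print(f"Total: ${total_low:,.0f} to ${total_high:,.0f}")
```

The exact sums are $6,525 and $42,525, which the table rounds to $6,500–$42,500. Lost revenue dominates the upper bound, so the detection delay matters far more than the engineering hours.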

And this was a preventable bug. The checkout flow had been manually tested before the release, but the mobile edge case was missed because nobody had time to re-test everything after the last-minute UI changes on Thursday.

Why "We Test Before We Ship" Isn't Enough

Most teams do test. The problem isn't intent — it's coverage and consistency.

Manual regression testing has three fundamental weaknesses:

It doesn't scale. As your product grows, the surface area for bugs grows with it. A team of two QA engineers physically cannot cover every flow before every release. Something always gets cut.

It's inconsistent. Manual testing depends on who's testing, how tired they are, and how much time they have. The same tester will miss different things on different days.

It slows you down. 78% of development teams now use automated testing tools to catch bugs before deployment — precisely because manual testing at speed is unsustainable. Teams that rely on manual regression end up releasing less frequently, not more carefully.

On average, a website in 2024 has approximately 20–25 bugs at launch, and 85% of website bugs are detected by users rather than during the testing phase. If users are your QA team, you've already lost.

The Shift-Left Principle: Catch Bugs Where They're Cheap

The concept of "shift-left testing" is simple: move quality checks as early as possible in the development cycle, where defects are orders of magnitude cheaper to fix.

Applied to regression testing specifically, this means running automated tests against every build — not just before a major release, but continuously. Every pull request. Every deployment to staging. Every Friday afternoon before the weekend.

The math works out immediately. If a regression suite catches one production bug per month that would otherwise have cost $10,000 to fix, and the suite costs $200/month to run, it returns 50x its cost ($10,000 / $200).
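The arithmetic behind that figure can be sketched as follows. The function name is hypothetical, and the sketch assumes one caught bug per billing month, which is what a 50x result implies. It computes the ratio of avoided cost to spend, which is how "ROI" is used here; a net ROI would subtract the spend first.

```python
def roi_multiple(bugs_caught_per_month: float,
                 cost_per_bug_usd: float,
                 suite_cost_per_month_usd: float) -> float:
    """Avoided production-bug cost divided by monthly spend on the suite."""
    avoided = bugs_caught_per_month * cost_per_bug_usd
    return avoided / suite_cost_per_month_usd


# One catch per month at $10,000 per bug, with a $200/month suite.
one_per_month = roi_multiple(1, 10_000, 200)

# Assumption: two-week sprints, so roughly two catches per month.
two_per_month = roi_multiple(2, 10_000, 200)

print(f"{one_per_month:g}x, {two_per_month:g}x")
```

A team on two-week sprints that catches one such bug every sprint would see roughly double the multiple, so 50x is the conservative reading.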

How No-Code Testing Makes This Accessible to Every Team

The traditional objection to automated regression is that it requires engineering resources to build and maintain. Writing Cypress or Playwright tests takes time, and maintaining them as the UI changes takes even more.

This is where no-code E2E testing tools change the equation. Instead of writing scripts, your QA team records user flows directly in the browser — clicking through the app as a real user would. The tool captures each action and replays it automatically on every future run.

The result is a regression suite that any QA engineer, PM, or developer can build and maintain, without touching a line of code. A test that would take a day to write in Cypress takes two minutes to record.

The comparison:

| Criterion | Manual QA | Cypress/Playwright | No-code E2E (E2Easy) |
|---|---|---|---|
| Setup time | None | Weeks | Under 5 minutes |
| Who can write tests | Anyone | Automation engineers | Any team member |
| Runs before every release | Only if someone does it | With setup | Scheduled automatically |
| Cost | $50–80/hr per tester | $100K+/yr engineer | Free during beta |

The Actual Prevention Playbook

Reducing the cost of production bugs isn't about testing more — it's about testing smarter and earlier. Here's what high-performing teams do differently:

Start with your most critical flows. You don't need 500 automated tests. You need 10–15 tests covering the flows that, if broken, would immediately damage revenue or trust: login, sign-up, checkout, key feature workflows.

Run regression automatically before every release. The goal is zero manual steps between "code merged" and "regression validated." Schedule your test suite to run on every deployment to staging.

Make the results visible to everyone. When a test fails, the whole team should know within minutes — not when a user files a support ticket. Connect your test results to Slack or your CI/CD pipeline.

Treat a failing test as a blocked release. A regression failure before release is a success — it means the system worked. Treat it as a blocker, not a nuisance.
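The last two points, visible results and a hard release gate, can be sketched in Python. This is an illustration, not any particular tool's API: `FlowResult`, `release_allowed`, and `slack_payload` are hypothetical names, and how results reach this code from your test runner is left open. The one external detail relied on is that Slack incoming webhooks accept a JSON body with a `text` field. The sketch only builds that body and does not send it.

```python
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class FlowResult:
    """Outcome of one automated regression test for a critical flow."""
    flow: str
    passed: bool


def failed_flows(results: list[FlowResult]) -> list[str]:
    return [r.flow for r in results if not r.passed]


def release_allowed(results: list[FlowResult]) -> bool:
    """Allow a release only if results exist and every flow passed."""
    return bool(results) and not failed_flows(results)


def summary_text(results: list[FlowResult]) -> str:
    failures = failed_flows(results)
    if failures:
        return "Release blocked: regression failed for " + ", ".join(failures)
    if not results:
        return "Release blocked: no regression results reported"
    return "Regression passed: release unblocked"


def slack_payload(results: list[FlowResult]) -> str:
    """JSON body suitable for posting to a Slack incoming webhook."""
    return json.dumps({"text": summary_text(results)})


results = [
    FlowResult("login", True),
    FlowResult("sign-up", True),
    FlowResult("checkout", False),
]
print(release_allowed(results))
print(slack_payload(results))
```

Treating an empty result list as a block is a deliberate choice in this sketch: a suite that silently did not run should stop a release just as a failing one does.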

FAQ

How much does a production bug actually cost on average? It depends on severity and company size, but the range is wide. A minor UI bug might cost a few hundred dollars in engineer time. A critical bug affecting core functionality — checkout, authentication, data integrity — can easily cost $10,000 to $100,000 when you factor in downtime, lost revenue, support costs, and reputation damage.

Why do so many bugs reach production despite QA teams? Manual testing doesn't scale with release frequency. As teams ship faster, manual regression gets compressed or skipped. The most common failure mode is a "we'll test the main flows" approach that misses edge cases, mobile variants, or recently changed adjacent features.

Is automated testing worth the investment for small teams? Yes — and it's especially valuable for small teams precisely because they have less capacity for manual testing. A five-person team that automates its core regression flows saves dozens of hours per sprint and ships with significantly more confidence.

What's the difference between unit tests and E2E tests? Unit tests check individual functions in isolation. E2E tests simulate a real user going through a complete flow — signing up, completing a checkout, resetting a password. Both matter, but E2E tests catch the category of bugs that unit tests can never find: the ones that only appear when all the parts are connected and a real person is using them.
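A toy Python illustration of that gap: two functions that each pass their own unit-level check, and a flow-level check that fails once they are connected. All names are hypothetical, and this is a simplified stand-in for a real browser-level E2E test, which would drive the actual UI.

```python
def cart_total_cents(prices_cents: list[int]) -> int:
    """Sum item prices. The result is in cents."""
    return sum(prices_cents)


def format_charge(amount_dollars: float) -> str:
    """Format an amount given in dollars for the payment request."""
    return f"${amount_dollars:.2f}"


def checkout(prices_cents: list[int]) -> str:
    # Integration bug: passes cents to a function that expects dollars.
    return format_charge(cart_total_cents(prices_cents))


# Unit-level checks: each function is correct in isolation.
unit_checks_pass = (
    cart_total_cents([1999, 501]) == 2500
    and format_charge(25.0) == "$25.00"
)

# Flow-level check: a $25.00 cart should be charged as $25.00.
flow_result = checkout([1999, 501])
flow_check_passes = flow_result == "$25.00"

print(unit_checks_pass, flow_check_passes, flow_result)
```

Both unit checks pass, yet the connected flow produces a charge of "$2500.00". Only a test that exercises the whole path surfaces the unit mismatch.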

Can a QA engineer without coding skills set up automated regression? With no-code testing tools, yes. Tools like E2Easy let any QA engineer record browser tests by clicking through the app — no scripting required. The recorded test replays automatically on every run.

The Bottom Line

A bug in production isn't just a technical problem. It's a business problem with a price tag that compounds with every hour it goes undetected: lost revenue, lost users, lost engineer focus, and sometimes lost trust that never fully comes back.

The good news is that the cost curve works just as well in reverse. Every bug caught in testing, before it reaches a single user, costs roughly $1,500 or less to fix instead of $10,000 or more.

The teams that ship fast and ship confidently aren't doing more testing. They're doing the right testing at the right time, automatically, before every release.

Ready to stop catching bugs in production? Start your free 30-day trial of E2Easy →

Author: Kyrylo Somochkin, CMO at E2Easy | Date: April 18, 2026